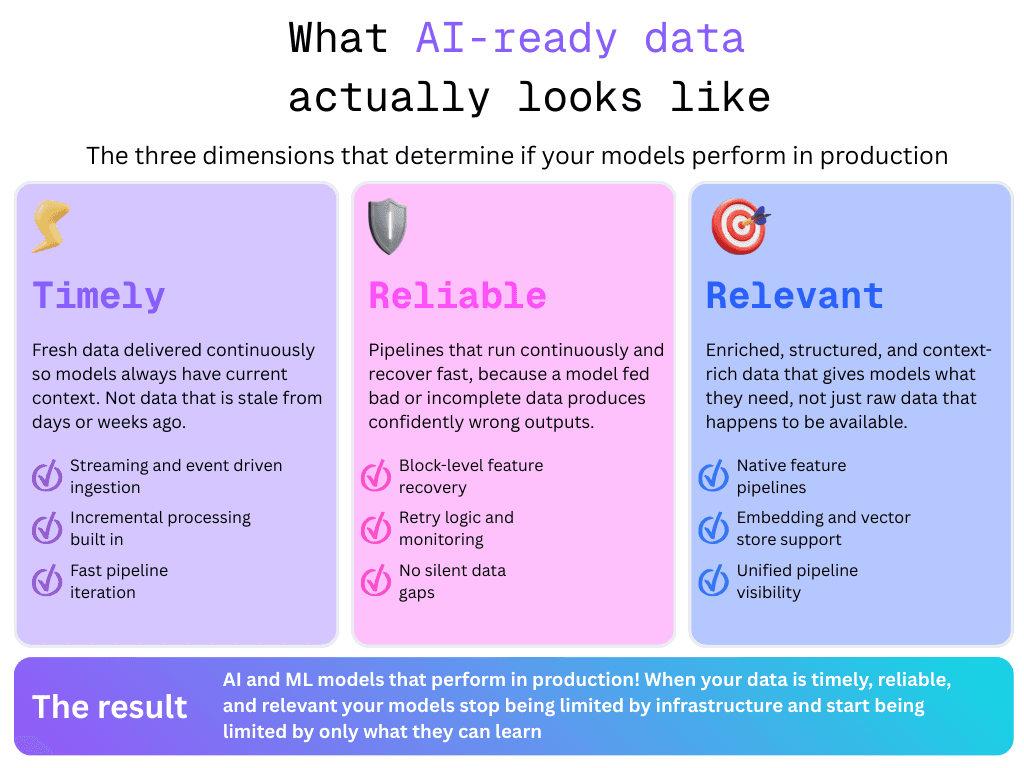

As machine learning and large language model (LLM) systems move from experimentation to production, the infrastructure required to run them reliably becomes more complex. AI applications are no longer just about models and prompts. They depend on continuous data pipelines that ingest data, generate features, produce embeddings, and deliver fresh context to models. In other words, data should be timely, relevant, and reliable.

For many teams, the first instinct is to reuse existing orchestration tools like Airflow. After all, Airflow has been a standard for orchestrating ETL pipelines for years. But when teams begin running ML and LLM workflows at scale, the limitations of traditional DAG-based orchestration quickly become apparent. What teams actually need is a platform that treats AI workloads as first-class use cases.

Not all platforms are built for AI

When evaluating data pipeline platforms for AI and machine learning workloads, three dimensions matter most: how timely, reliable, and relevant your data is when it reaches your models. Airflow remains solid for batch pipelines but shows its limits when AI workflows demand faster iteration, real-time data, and native ML support. Mage addresses these gaps with interactive development, block-level recovery, and built-in support for streaming and embedding pipelines. These native features make Mage the stronger platform for teams building AI and ML systems in production.

Timely data: keeping models fed with fresh context

AI systems are only as good as the data they receive at the time of inference. A recommendation model running on week-old behavior data, or an LLM using context that hasn't been refreshed since yesterday, will produce stale and often inaccurate outputs. This may cause AI systems to hallucinate and provide inaccurate information. For production AI systems, timely data means your pipelines can ingest, process, and deliver fresh information continuously.

Airflow's cron-based scheduling model works well when data moves predictably, but struggles when AI workloads demand more. When a feature pipeline needs to trigger the moment new data lands, or an embedding store needs to update incrementally as documents change, teams end up writing significant custom logic to get there. DAG updates require a full redeploy and scheduler re-parse, which makes fast iteration on pipeline logic an operational burden rather than a routine task.

Mage handles all of this natively. Streaming and event-driven pipelines are first-class capabilities, not workarounds. Incremental processing is built directly into pipeline blocks, so your models receive fresh features and context without reprocessing entire datasets on every run.

Reliable data: Pipelines your models can depend on

Incomplete or silently failed data is more dangerous than no data at all. Models that are fed bad inputs will produce confident but wrong outputs. Reliability means your pipelines run consistently, failures surface quickly, and recovery is fast and precise.

Both platforms offer retry logic and scheduling, so neither leaves you without a safety net. Where they diverge is operationally. In Airflow, a failed task typically means tracing back through the DAG, identifying the exact failure point, and rerunning from there. The process to debug failed code in Airflow is complex and time-consuming. Airflow's mature ecosystem of monitoring integrations helps, but the debugging burden lands on the engineer.

Mage makes debugging your code simple. Block-level reruns mean when something breaks, you rerun that block alone. You don’t need to rerun the entire pipeline. For data teams managing multiple AI workloads in production, that speed of recovery adds up. It also makes root cause analysis significantly easier when data quality issues need to be traced back to a specific transformation step.

Relevant data: Delivering the right context to your models

Timely and reliable data is necessary but not sufficient. The data reaching your models also needs to be relevant. It should be properly engineered, enriched, and structured for the specific workload. For ML systems that means well-built feature pipelines. For LLM systems that means up-to-date embeddings and retrieval-ready context that reflects the current state of your knowledge base.

Airflow can orchestrate these workflows, but feature pipelines and embedding refreshes are not native concepts. An Airflow developer will require knowledge of custom operators, external libraries, and orchestration logic that grows more complex with every new model or use case added to the stack. Teams often end up maintaining more pipeline infrastructure than model infrastructure.

In Mage, building a feature pipeline, refreshing a vector store, or wiring up an LLM inference workflow are native use cases supported by the block architecture. Each step is modular, independently testable, and reusable across pipelines. The unified pipeline view gives engineers and decision makers clear visibility into what data is flowing into models, where transformations are applied, and what context is being delivered at inference.

Where Airflow still wins

Fairness matters in any platform comparison. Airflow has a decade of production use and one of the largest open-source communities in data engineering. Teams with existing DAG infrastructure, established monitoring integrations, and deep institutional knowledge of Airflow face a real migration cost when moving to a different workflow application. Its production observability ecosystem is also more mature than Mage's today. The case for Mage isn't that Airflow is broken, it's that AI workloads have requirements that Airflow was never designed to meet, and the workarounds required to get there add up quickly at scale.

Conclusion

The bar for data infrastructure has risen alongside AI adoption. Timely, reliable, and relevant data aren't just abstract goals. These are necessary operational requirements for AI systems that actually perform in production. Mage was built to meet those requirements natively. Airflow asks engineering teams to build toward them through custom tooling and additional complexity. If your organization is running AI or ML workloads today, it is worth an honest evaluation of whether your data platform is keeping pace. Schedule a free demo with Mage to see how it handles your AI and ML workloads firsthand.

Authors: